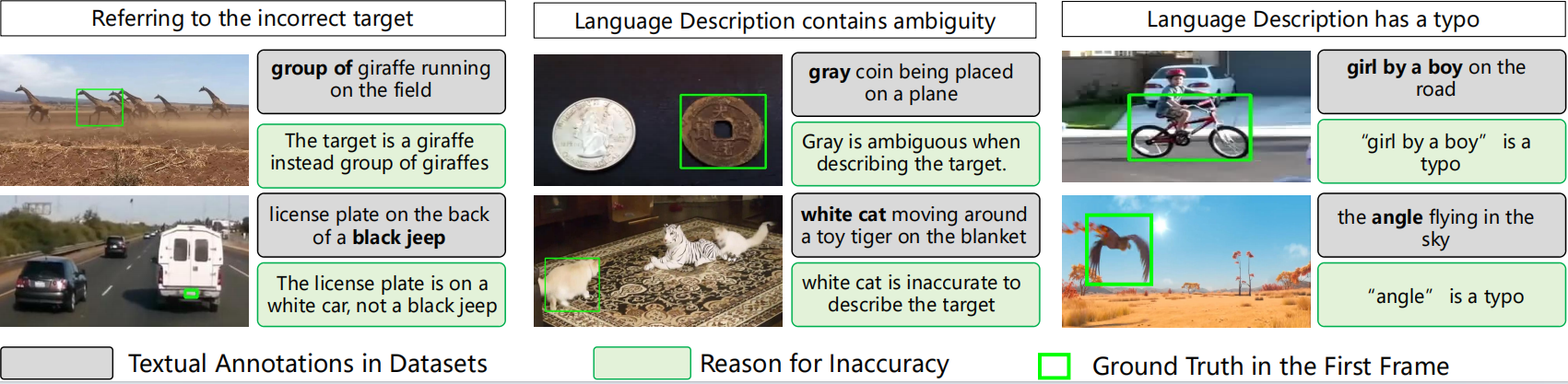

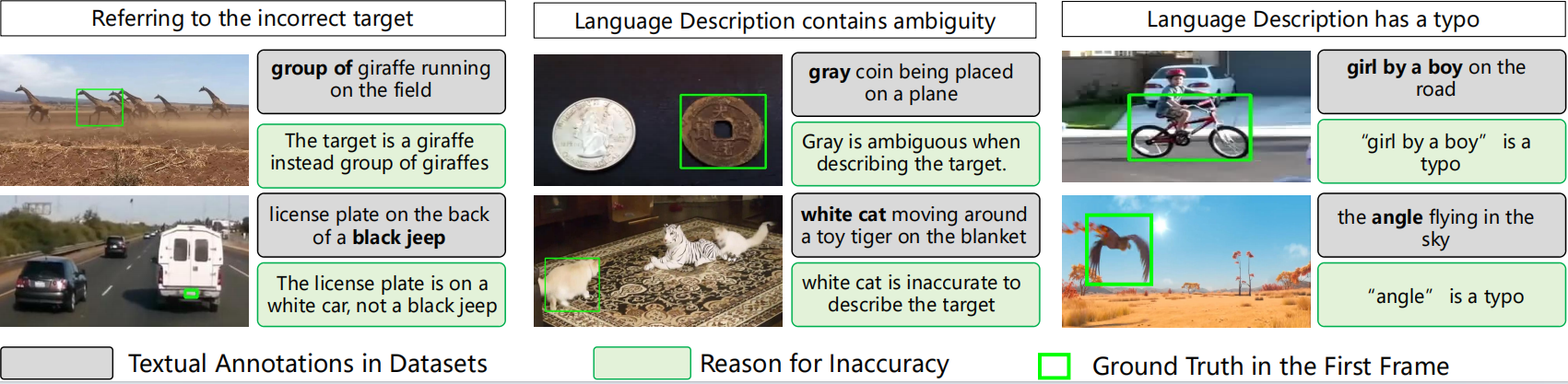

Overview of Inaccurate Language Descriptions

Existing language descriptions suffer from three issues: referring to incorrect targets, containing ambiguities, and including typographical errors.

Visual object tracking focuses on locating a target object within a video sequence based on an initial bounding box. Recently, Vision-Language (VL) trackers have been proposed to utilize additional natural language descriptions to enhance versatility in various applications. Despite this potential, VL trackers still underperform the State-of-the-Art (SoTA) visual trackers in terms of tracking accuracy. We find that this inferiority is primarily due to their heavy reliance on manual textual annotations, which include the frequent provision of ambiguous language descriptions. In this paper, we identify, for the first time, that over 10% of textual annotations in existing VL tracking datasets suffer from inaccuracies through manual evaluation. We release the manual evaluation results and the generated textual descriptions, aiming to drive advancements in VL tracking

Existing language descriptions suffer from three issues: referring to incorrect targets, containing ambiguities, and including typographical errors.

@inproceedings{chattracker,

title={ChatTracker: Enhancing Visual Tracking Performance via Chatting with Multimodal Large Language Model},

author={Sun, Yiming and Yu, Fan and Chen, Shaoxiang and Zhang, Yu and Huang, Junwei and Li, Yang and Li, Chenhui and Wang, Changbo},

booktitle={The Thirty-eighth Annual Conference on Neural Information Processing Systems (NeurIPS) 2024}

}